Cortex Envy

Bringing up Baby Einstein

Mark Dery

Cortex envy—the haunting fear that someone, somewhere, may be smarter than you are—was my birthright. When I was little, my mother (last seen protesting her high IQ to a gerontologist, just before Alzheimer’s hit the DELETE key on her mind) liked to tell me my Marvel Comics origin story: how she acquired target on my future father because she knew he was bright, she knew she was bright, and it only stood to reason, therefore, that do-it-yourself eugenics would produce a wunderkind.

My parents divorced shortly after I was born, but what of it? Decades before the Nobel Prize sperm bank, my mother had genetically engineered a brainchild all her own, named—in what might charitably be called an excess of optimism—Mark Alexander, after the Roman emperor Marcus Aurelius and the Greek conqueror Alexander the Great.

Psychologically, the expectation that I would live up to my namesakes’ reputations—world domination, a breezy way with the gnomic one-liner, burial in a solid gold sarcophagus while legions wept—and that I would do so by dint of my supposedly prodigious intellect proved almost unbearable, saddling me with an Anxiety of Influence so crushing it inspired suicidal ideation before I was out of short pants. (How many Baby Einsteins are dragging this cross, I wonder?)

A precocious reader, I was devouring comics before kindergarten; by grade school, I was reading voraciously, omnivorously, driven by the lash of great expectations (“He isn’t living up to his potential” was a tongue-clucking refrain in parent-teacher conferences).

Undaunted by the illimitable vastness of things, I dreamed, half-seriously, of knowing everything. I compiled lists of every jawbreakingly polysyllabic or vanishingly arcane word I encountered in my reading. What better way to prove you’re the Smartest Boy in the World—or a pluperfect little asshat—than to drop a vocabulary bomb like “ovine hebetude” in the lunchroom or, better yet, before an audience of overawed adults?

I wanted to be Gary Mitchell when I grew up. Mitchell is the mutant helmsman, in the Star Trek episode “Where No Man Has Gone Before” (1966), whose exposure to a “magnetic space storm” endows him with godlike psionic abilities, goth-tastic silver pupils, and, not incidentally, geometrically multiplying brainpower. Sucking information out of the starship’s memory banks faster than the computer can deliver it (an experience that practically gives the machine a microstroke), he’s the instant master of every thinker he encounters. Spinoza? “Once you get into him, he’s rather simple,” says Mitchell. “Childish, almost.”

Meanwhile, in the parallel world of 1970s Earth, my stepdad and I were locked in the Freudian version of Ultimate Cage Fighting, a passive-aggressive slapfest that pitted my Oedipal desire to slay the father against his Cronus Complex.[1] Raging across indoor theaters of war, from the dinner-table to the so-called “family room” (a shrine to the rabbit-eared god of domesticity, the TV), we re-enacted the beatdown from the final minutes of “Where No Man Has Gone Before,” when Mitchell and another mutant trade thunderbolts, pausing between rounds to give each other the shiny silver stinkeye.

Our fraught psychodynamic had its origins in my mental caricature of my stepdad as Conan the Vulgarian, a life-of-the-party lowbrow with an unconvincingly hearty belly laugh and an Archie Bunkerian fondness for “Polack” jokes; a man who rejoiced in the hillbilly hijinx of the comedy show Hee Haw; a man whose literary tastes ran to “hard” SF for ham radio buffs and, yes, the orientalist gore porn of Robert E. Howard’s Conan saga.

Internalizing my mother’s aspirational bohemianism—a suburbanite’s dream of the beatnik life, macraméd out of Joan Baez and cinderblock bookshelves, Rod McKuen and community-college pottery—I claimed the top of the taste hierarchy as my birthright and consigned my stepdad to the bottom rung of the social Darwinian ladder. When our hostilities escalated to physical violence—he backhanded me, knocking me to the floor—I saw it as a Clash of Civilizations: Brahmin versus barfly. And, crucially, smart versus d’oh!, because the highbrow/middlebrow/lowbrow strata in the pyramid of taste cultures correlate not only to class but to IQ, befitting their roots in the racialized anthropology of the nineteenth century.

Twenty years after my mother and stepdad divorced, he sent me a mea culpa, confessing he’d “bullied” me out of intellectual insecurity. “I felt challenged intellectually,” he wrote. “It became a contest to see who was smarter, me or the kid.”[2] Apparently, I had taken an IQ test, on the eve of junior high, to qualify for placement in what was then called the “gifted children” program,[3] and my score had been perilously close to his, maybe higher. He would never know for certain, he said, because the administrator who scored the test wouldn’t release my numerical score, merely confirming that I had scored “well within the qualifying range for the program, which required an IQ of at least 140.”[4] Cortex envy was an auger that turned in his head.

• • •

I’m sitting in a Manhattan apartment, across a small table from Nate Thoma, a PhD candidate in clinical psychology at Fordham University, who is dispassionately administering the Wechsler Adult Intelligence Scale test, third edition (the WAIS-III, pronounced “wayce” by those in the field). I’m taking the test partly because I want to banish the specter of unfulfilled promise, once and for all, by outscoring my Inner Child Prodigy (in other words, I want to see who’s smarter, me or the kid),[5] and partly because guinea-pigging yourself makes for good stunt journalism.

Published in 1955, the WAIS was David Wechsler’s new and improved version of his Wechsler-Bellevue Intelligence Scale, which he had developed in 1939 while serving as chief psychologist at the Bellevue Psychiatric Hospital in New York City. Dissatisfied with existing intelligence tests, which had been designed with children in mind, Wechsler created a neuropsychological exam better suited to diagnosing his adult patients. In doing so, he repurposed elements from existing instruments such as the Binet-Simon Scale—the Ur-IQ test, introduced in France in 1905 by the psychologists Alfred Binet and Théodore Simon for the diagnosis of what would now be called “special ed” children—and the US Army’s Alpha exam, designed by Robert Yerkes during World War I to identify prospective officers and weed out “intellectual defectives,” as Yerkes put it in the delicate parlance of the day.[6]

Today, the WAIS-III and its counterpart, the Wechsler Intelligence Scale for Children, or WISC, are the most widely used of the so-called IQ tests, superseding the once-universal Stanford-Binet.[7] Defining intelligence as “the global capacity of a person to act purposefully, to think rationally, and to deal effectively with his environment,”[8] Wechsler conceived of it as a constellation of mental abilities, a departure from the prevailing theory, articulated in 1904 by the English psychologist Charles Spearman, that intelligent behavior is attributable to a single, underlying cognitive factor, a theoretical entity that Spearman dubbed g (for general factor).

In keeping with Wechsler’s belief that intelligence is multifactorial, the WAIS divides mental abilities into two hemispheres, so to speak: Verbal IQ and Performance (non-verbal) IQ, which it subdivides into four indexes: Verbal Comprehension and Working Memory on the Verbal side; Perceptual Organization and Processing Speed on the Performance side.

The Verbal Comprehension subtests include a cultural-literacy quiz whose unabashed Eurocentricity would gladden the heart of E.D. Hirsch (Who wrote Hamlet? Who painted the Sistine Chapel?); a test of abstract reasoning in which you’re given two terms—“piano” and “drum,” “orange” and “banana”—and asked what they have in common; and “Vocabulary,” in which you’re asked to define a series of increasingly complex words. (For what it’s worth, vocabulary, of all the subtests, has the highest correlation with Full Scale IQ.) The Working Memory section includes arithmetic problems; “Digit Span,” which involves memorizing strings of up to nine random digits and reciting them forward and backward; “Letter-Number Sequencing,” in which you’re required to memorize a long, utterly random alphanumeric sequence, on the spot, then repeat it back to the administrator with the numbers in numerical order and the letters in alphabetical order; and “Comprehension,” a vaguely named catch-all that tests your common sense as well as your grasp of the cultural logic behind laws and customs: Why is it important to have child labor laws? If you found an envelope on the street that was sealed and had a new stamp on it, what would you do? Why do people who were born deaf have difficulty learning spoken language?

In the Perceptual Organization and Processing Speed indices of the WAIS’s Performance IQ section, you’re tasked with “Block Design” (recreating geometric patterns using color-coded blocks—a test of spatial perception, abstract visual processing, and problem solving); “Picture Completion” (supplying the missing detail in an image, which measures the ability to rapidly process visual details); and “Picture Arrangement” (assembling a series of wordless, comic strip-like panels into logical narrative sequences, an activity that ostensibly measures an amorphous attribute called “social judgment,” although an authoritative source concedes that “data validating its use as a measure of social judgment has not been forthcoming”[9]).

• • •

On the Day of Psychometric Reckoning, I arrive girded for battle, heavily armored with social-constructionist skepticism about psychology’s claims for the scientific objectivity and empirical validity of intelligence testing.

For much of the twentieth century, psychometric testing served as what Foucault would call a societal “disciplinary mechanism” on behalf of the established order, surveilling the mass mind and policing the norms of industrial society. Such tests assisted in the Taylorization of the American intellect, opening doors for obedient workers and unquestioning soldiers, herding minorities into remedial classes and menial jobs, pathologizing and in some cases even criminalizing dissidents and deviants (homosexuals spring immediately to mind).

More than any other psychologist, it was Lewis Terman who transformed the IQ test into an instrument of social engineering. In 1916, Terman introduced the Stanford-Binet Scale—essentially the Binet-Simon Scale standardized using a large American sample and equipped with a new means of comparing individual performance to group norms (the now-mythic “intelligence quotient”).[10] In the Stanford-Binet, Terman handed examiners a scale for weighing the worth of any intellect—and, based on the numerically scored results, assigning each American his proper place in the socioeconomic scheme of things.

In 1917, Yerkes repurposed the test for his US Army exams, evaluating millions of recruits and, more important, introducing America to the IQ test. “Despite the army’s dim view of intelligence tests and their practical relevance,” notes Stephen Murdoch in IQ: A Smart History of a Failed Idea,

Robert Yerkes, Lewis Terman, and [their] colleagues used the war to catapult their careers and field. From then on, American students have taken IQ tests and their standardized test progeny, such as the SAT and graduate school entrance exams. ... Terman and Yerkes’ biggest accomplishment was not convincing the army to test recruits, but persuading America of the usefulness and success of the army tests, despite the dearth of supportive evidence. At war’s end, Terman said he was immediately “bombarded by requests from public school men for our army mental tests in order that they may be used in public school systems.”[11]

Terman oversaw the creation of the National Intelligence Tests for grades three to eight. Unlike the Binet-Simon, which was designed to help underachieving children receive specialized instruction intended to bolster their performance, Terman’s grade-school IQ tests were designed, in the Brave New World of postwar America, to divert Semi-Moronic Epsilons and mega-brainy Alpha Pluses into their appropriate career paths, a sorting process known as “tracking.”

For much of their history, intelligence tests have been rotten with the cultural and class biases of their makers, a diagnostic deck stacked against minorities, immigrants, and those at the bottom of the wage pyramid. Test designers have equated English-language fluency with intelligence, presumed a familiarity with upper-class pastimes such as tennis, and expected the examinee to provide the word “shrewd” as a synonym for “Jewish.” As late as the 1960 revision, the Stanford-Binet was presenting six-year-old children with crude cartoons of two women, one obviously Anglo-Saxon, the other a golliwog caricature of an African-American, with a broad nose and thick lips. The test accepted only one correct answer to the question, “Which is prettier?”[12]

Terman begrudgingly conceded that environmental factors might play some small part in IQ-test scores. For the most part, though, he was a thoroughgoing hereditarian. Like the Victorian psychologist Francis Galton (the founding father of eugenics, whom he devoutly admired), Terman believed that intelligence was inborn and unalterable; DNA was destiny. “High-grade or border-line deficiency ... is very, very common among Spanish-Indian and Mexican families of the Southwest and also among negroes,” he notes, in The Measurement of Intelligence (1916). “Their dullness seems to be racial. ... Children of this group should be segregated into separate classes and be given instruction which is concrete and practical. They cannot master abstractions but they can often be made into efficient workers.”

At the very moment that intelligence testing was sanctifying the race-based educational neglect of blacks, Mexicans, and other textbook examples of the “defective germ plasm,” legislatures in thirty-three states were writing the compulsory sterilization of the “unfit” into law, a stroke of the pen that would lead, over time, to the coerced sterilization of sixty thousand Americans.[13] The black stork of the eugenics movement was spreading its wings across America, and in much of the era’s officially sanctioned bigotry, the IQ test was a silent partner. “While America has had a long history of eugenics advocacy,” notes the historian Clarence J. Karier, “some of the key leaders of the testing movement were the strongest advocates for eugenics control. In the twentieth century, the two movements often came together in the same people under the name of ‘scientific’ testing.”[14]

Terman was Exhibit A for Karier’s case: a pioneer of cognitive testing and chair, for two decades, of the Stanford psychology department, he was also a founding member, in 1928, of the Pasadena-based Human Betterment Foundation, a well-funded eugenics group that crusaded vigorously for the compulsory sterilization of the “insane and feebleminded” patients in California state institutions. In The Measurement of Intelligence, he lamented American resistance to the modest proposal that the intellectually unfit (as determined by IQ testing, of course) “should not be allowed to reproduce, although from a eugenic point of view they constitute a grave problem because of their unusually prolific breeding.”[15] Nonetheless, the Foundation’s efforts gave aid and comfort to racial hygienists across the Atlantic, most notably a certain disgruntled Austrian corporal with a Chaplinesque moustache, who cited California precedent in support of Nazi eugenics policies: “I have studied with interest the laws of several American states concerning prevention of reproduction by people whose progeny would, in all probability, be of no value or be injurious to the racial stock.”[16]

• • •

Knowing what a blunt instrument the IQ test is, what a dark and storied history it has, why am I so nervous about taking the WAIS? Why am I so inordinately proud when I knock a few softball pitches—What is the speed of light? Where were the first Olympics held? Who was Catherine the Great? What is the Koran?—out of the park? Why do I experience a near panic attack when I can’t name three kinds of blood vessels or (to my undying chagrin) the seven continents? Worst of all, why am I so damnably relieved when the PhD candidate who administered the test and scored my performance tells me, “You did quite well. If you turn to page two, you can see where it says ‘IQ scores.’”

“Quite well” is where we’ll leave it, drawing the curtain of modesty across my Full-Scale IQ (calculated by combining the examinee’s Verbal and Performance scores). But since I’m guinea-pigging myself for your delectation, dear reader, I will confide that my examiner, Nate, characterized my Verbal IQ as “extremely high.” My Performance IQ, on the other hand, was “very average” (a phrase I’ll treasure forever for its droll use of the intensifier). Am I one of nature’s little cognitive jokes, an idiot savant with the Verbal IQ of the OED on DMT and the Performance IQ of a stump?

Nate replies that, given my cognitive lopsidedness, Full-Scale IQ score simply isn’t an accurate representation of my cognitive functioning. “It’s more accurate to look at it in terms of multiple intelligences,” he says—different skills. Apparently, cases like mine are far from uncommon. Partly in recognition of that fact, cognitive psychology has undergone a paradigm shift in recent decades, from Spearman’s notion of a general intelligence (g) affecting performance in every area of cognitive functioning to the Gardnerian model of multiple intelligences, each comprised of subintelligences, which may interact synergistically. Which is reassuring, although it sounds uncomfortably close to one of those pop-psych homilies about self-esteem, here in the land where All Men are Created Equal but All of the Children are Above Average. This, I feel, is the time to confide to Nate, the psychotherapist in training, that for much of my life I’ve been gnawed by the neurotic suspicion that my idiosyncratic use of language (rarified vocabulary, arcane allusions, literary syntax), coupled with a fondness for “intellectual” subjects, creates the illusion of intelligence in a society with a pronounced logocentric bias.

Nate doesn’t exactly steeple his fingers, but he does modulate into the intense, reflective key familiar from Analysts I Have Known. “I’m glad you brought that up,” he says. “It’s an important responsibility of the person sharing the interpretation of the results to help a person make personal meaning of the results, especially something that is so fraught as IQ.” Most laypeople aren’t aware that IQ tests are most commonly used in a neuropsychological context, he explains, to determine the cognitive effects of, say, a stroke or traumatic brain injury—vital information in devising a treatment strategy for the patient. “IQ tests are never used to just find out someone’s IQ—their rank in the world,” Nate says.

Nonetheless, Terman’s restless specter haunts the popular imagination. In the public mind, Nate notes, the IQ test is still seen as an implacable, infallible measurement of “your core being—the essence of your intelligence—which in our society is also your rank.” Which is precisely why the social critic Walter Lippmann decried the IQ test as cant in a lab coat, its hereditarian pseudoscience pernicious to democracy. “I hate the impudence of a claim that in 50 minutes you can judge and classify a human being’s predestined fitness in life,” he wrote. “I hate the sense of superiority which it creates, and the sense of inferiority which it imposes.”[17]

In a 1922 debate with Terman in the New Republic, Lippmann put his finger on the Achilles’ heel of intelligence testing: “We cannot measure intelligence when we have never defined it.”[18] Psychometricians hotly deny this, but close examination reveals that definitions of intelligence remain fuzzy around the edges. Too often, psychologists have taken refuge in a tautology, defining intelligence as what intelligence tests measure. In 1923, the Harvard professor Edwin Boring soberly asserted, “Intelligence as a measurable capacity must at the start be defined as the capacity to do well in an intelligence test.”[19]

Of course, this assumption presumes that test items correlate, in some empirically verifiable way, to whatever intelligence truly is. Yet test items are indelibly stamped with cultural values, such as logocentricity, the importance of conforming to social norms or, be it said, test-taking skills—specifically, the ability to excel at intelligence tests. “The intelligence test ... does not weigh or measure intelligence by any objective standard,” argued Lippmann. “It simply arranges a group of people in a series from best to worst by balancing their capacity to do certain arbitrarily selected [emphasis mine] puzzles, against the capacity of all the others. ... The intelligence test, then, is fundamentally an instrument for classifying a group of people, rather than ‘a measure of intelligence.’”[20]

• • •

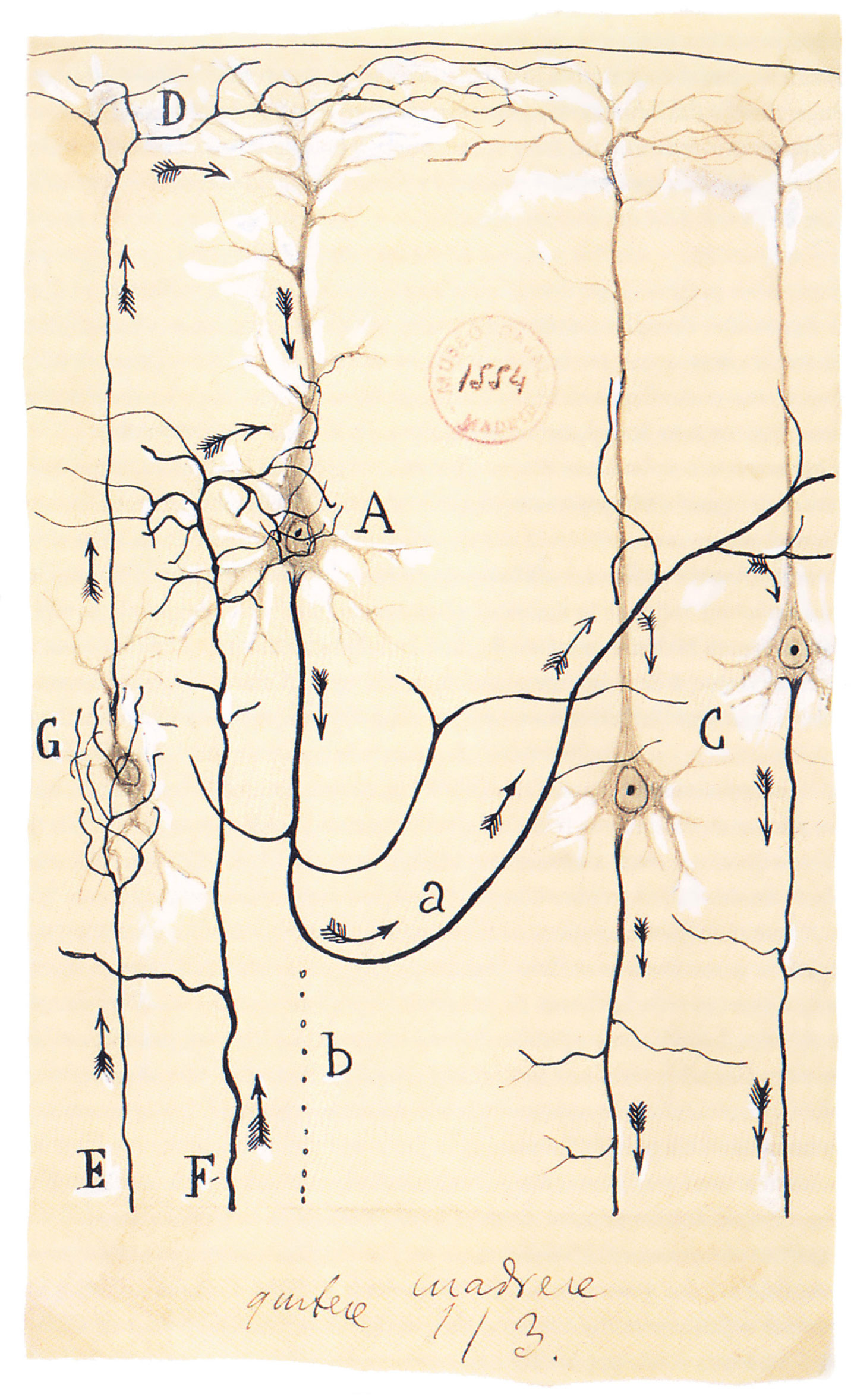

Maybe intelligence is a connectionist phenomenon, an emergent property of the complex system we call mind. Maybe it’s the ability to draw lines of connection between far-flung scraps of information and insight, constellating new meanings out of thin air; to discover the intertextual wormholes connecting parallel universes of knowledge and experience; to map the labyrinth of subtextual tunnels secretly connecting all stories and histories. Or is the notion of hidden meanings and buried connections just some epistemological metafiction—Nabokov’s idea of Intelligent Design, Eco’s answer to conspiracy theory? Is the intellectual tendency to Always Connect, and to equate that with intelligence, just a cognitive echo of the neurological fact that our thoughts travel on dendritic networks—a saner apophenia?

Shaded by a broad umbrella, I’m sitting at a patio table on a bright, blank Southern California morning, in the assisted-living facility where my mother lives. I’m looking into her Alzheimer’s-glazed eyes, searching their brown nothingness for any vestige of her mind’s big bang—the cognitive equivalent of background radiation, neutrinos, anything. Mute, gazeless, she seems oblivious to her surroundings: the proverbial empty house, lights on, nobody home. The god of irony has seen fit to wipe the mental hard drive of this woman who venerated the intellect. It occurs to me that her enduring gift to me, her failed attempt at engineering the World’s Smartest Boy, may be (another irony!) a line of genetic code that even now could be triggering amyloid plaques and neurofibrillary tangles, unplugging the neural connections in my brain one by one. My mother is pointing. She makes a clotted noise. It could be the word “pattern.” It could be an infantile glub. I follow her gaze, up, to the umbrella’s underside. I hadn’t noticed it, but the open blossom of the spreading canopy is covered with a reticulate pattern—intersecting lines that branch and branch and branch again, in an almost fractal way. Dendrites, I think.

1. Loosely defined, the Cronus Complex is the psychodynamic in which a father emulates the tyrannical behavior of his father, “devouring” (i.e., psychically dominating) his children—specifically, his male children—to forestall any challenges to his patriarchal authority. Franco Fornari defines the Cronus Complex as “the inverse of the Oedipus Complex. It consists primarily in the father’s unconscious hostility and rivalry in relation to his sons, and in his unconscious wish to castrate, humiliate, and annihilate them.” See Fornari, The Psychoanalysis of War (Garden City, NY: Anchor Press, 1974), p. 13. The complex takes its name from the Greek titan who, envious of his father’s powers as ruler of the universe, castrated and deposed him. Terrified by a prophecy that his own son would follow his patricidal example, Cronus ate his offspring as soon as they emerged from the womb. See Rachel Bowlby, “The Cronus Complex” in Freudian Mythologies: Greek Tragedy and Modern Identities (New York: Oxford University Press, 2007), pp. 146–168; Warren Colman, “Tyrannical Omnipotence in the Archetypal Father” in The Journal of Analytical Psychology, vol. 45, issue 4, pp. 521–539; and John W. Crandall, “The Cronus Complex,” Clinical Social Work Journal vol. 12, number 2 (June 1984), pp. 108–117.

2. Letter to the author from his stepfather (name concealed to protect his privacy), 21 August 1996.

3. This is an oddly Christian name for a federal initiative rooted in America’s panic-button response to Russia’s launch of Sputnik—the prioritization of math and science, in early education, in order to recapture the techno-scientific beachhead—and front-burnered by the 1972 Marland Report to Congress, which urged “differential educational programs and/or services” for “gifted and talented children” to enable them to “realize their contribution to self and the society.” Quoted in Vicki L. Schwean and Donald H. Saklofske, eds., Handbook of Psychosocial Characteristics of Exceptional Children (New York: Kluwer Academic/Plenum Publishers, 1999), p. 403. To this ex-Protestant’s ear, “gifted” is uncomfortably evocative of the “gifts of the spirit” described in Corinthians. The implication is clear: academic excellence, rather than being the fruit of hard work, is pennies from heaven, bestowed by a capricious divinity on an undeserving child who, but for the grace of god, might just as easily have been consigned to the short bus.

4. Email to the author from his stepfather, 27 February 2009, 4:01 AM.

5. Bearing in mind that the WAIS is normed for age group and allowing for the precipitous decline, with age, in mental acuity.

6. Quoted in Raymond E. Fancher, The Intelligence Men: Makers of the IQ Controversy (New York: W. W. Norton & Company, 1985), p. 117.

7. “So-called” because, as critics of the very idea of IQ testing point out, the WAIS and other tests like it may assess specialized cognitive skills—such as, say, IQ test-taking—rather than true intelligence, the definition of which is still launching doctoral dissertations. “Most widely used” according to Randy W. Kamphaus, Clinical Assessment of Child and Adolescent Intelligence (New York: Springer Science + Business Media, 2005), p. 292.

8. David Wechsler, The Measurement of Adult Intelligence (Baltimore, MD: Williams & Wilkins, 1939), p. 229.

9. David S. Tulsky, Donald H. Saklofske, Gordon J. Chelune, and Robert K. Heaton, eds., Clinical Interpretation of the WAIS-III and WMS-III (San Diego: Academic Press, 2003), p. 70. This user’s guide to the WAIS contains detailed descriptions of each of the subtests, setting them in the context of their historical origins and in some cases hotly contested revisions.

10. The formula for calculating an examinee’s IQ is: mental age—the chronological age implied by the score she received, based on the average score for a given age—divided by her actual age, then multiplied by a hundred to eliminate the decimal point, a concept Terman borrowed from the German psychologist Wilhelm Stern. Because this formula works well enough when comparing children to children, but is notably less reliable for adults, since intelligence plateaus in adulthood, today’s intelligence tests have retired the IQ formula, and are normed for age group. The term IQ, however, persists, unkillable as a termite. Or, if you will, Termite.

11. Stephen Murdoch, IQ: A Smart History of a Failed Idea (Hoboken, NJ: John Wiley & Sons, 2007), pp. 91–92.

12. See Clarence J. Karier, “Testing for Order and Control in the Corporate Liberal State” in N. J. Block and Gerald Dworkin, eds., The IQ Controversy (New York: Pantheon Books, 1976) pp. 352–353.

13. Edwin Black, “Eugenics and the Nazis—The California Connection,” The San Francisco Chronicle, 9 November 2003, www.sfgate.com/cgi-bin/article.cgi?file=/chronicle/archive/2003/11/09/ING9C2QSKB1.DTL.

14. Karier, op. cit., p. 344.

15. Lewis Terman, The Measurement of Intelligence (New York: Houghton Mifflin Company, 1916), pp. 91–92.

16. Quoted in “Hitler’s Debt to America,” an excerpt from Edwin Black’s War Against the Weak, The Guardian, 6 February 2004, www.theguardian.com/uk/2004/feb/06/race.usa. For more on this subject, see Stefan Kuhl’s excellent The Nazi Connection: Eugenics, American Racism, and German National Socialism (Oxford & New York: Oxford University Press, 1994).

17. Quoted in Mitchell Leslie, “The Vexing Legacy of Lewis Terman,” Stanford Magazine, July–August 2000, www.stanfordmag.org/contents/the-vexing-legacy-of-lewis-terman.

18. Walter Lippmann, “A Future for the Tests,” in The IQ Controversy, op. cit., p. 28.

19. Quoted in N. J. Block and Gerald Dworkin, “IQ, Heritability, and Inequality,” in The IQ Controversy, op. cit., p. 425.

20. Walter Lippmann, “The Measure of the ‘A’ Men,” in The IQ Controversy, op. cit., p. 11.

Mark Dery is a New York–based cultural critic, professor, lecturer, and freelance journalist. His most recent book is The Pyrotechnic Insanitarium: American Culture on the Brink (Grove/Atlantic, 1999). In summer 2009, he was appointed a visiting scholar at the American Academy in Rome; while in residence, he will write a short book on the “Anatomical Venuses” (wax obstetric models) in Italy’s curiosity cabinets.
